Image analysis and processing

From Wiki CEINGE

Image deconvolution in optical microscopy

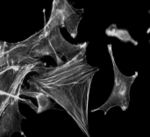

Three-dimensional optical microscopy is nowadays an efficient tool for investigating living biological samples. Optical sectioning yields a stack of 2D images. However, because of the nature of the optical transfer function (OTF) of the system and non-optimal experimental conditions, the acquired raw data are usually affected by distortions and have to be restored before biological analysis can be carried out. Images obtained with a conventional fluorescence microscope contain light from the whole 3D sample: what is actually present in the focal plane may be blurred by out-of-focus fluorescence. This effect is reduced with a Unix program, developed in house, that performs the computational deconvolution.

The out-of-focus light is characterised by the 3D image of a point source, called the point spread function (PSF). The PSF can be determined either experimentally or theoretically, and both methods are used so that the results can be checked for agreement. For the experimental approach, a fluorescent microsphere serves as the point source; as the best approximation, a microsphere with a diameter of about one third of the resolution limit expected for the microscope objective in use was chosen. For the mathematical-physical model, the PSF is assumed to have circular symmetry and is defined by a few parameters, such as the numerical aperture and magnification of each objective and the wavelength of the fluorescent light.

Deconvolution algorithms attempt to reassign blurred light to its location of origin. This is done by reversing the convolution of the object with the PSF.
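To make the idea of reversing a convolution concrete, here is a minimal sketch of one common iterative scheme, Richardson-Lucy deconvolution. The text does not say which algorithm the in-house program uses, so this is an illustrative stand-in and not a description of that program. To stay short and dependency-free it works on a 1D signal with circular boundaries; the function names, the toy PSF values and the iteration count are all assumptions chosen for the example. A real stack would be 3D and would normally use FFT-based convolution.

```python
def circular_convolve(signal, kernel):
    """Circular convolution of two equal-length sequences.

    The kernel is stored with its centre at index 0, so negative
    offsets wrap around to the end of the list.
    """
    n = len(signal)
    return [
        sum(signal[j] * kernel[(i - j) % n] for j in range(n))
        for i in range(n)
    ]


def richardson_lucy(observed, psf, iterations=50, eps=1e-12):
    """Estimate the unblurred object from `observed`, given the PSF.

    Each iteration re-blurs the current estimate, compares it with the
    observed data, and multiplies the estimate by the back-projected ratio.
    """
    n = len(observed)
    # Mirrored PSF, psf_flipped[k] = psf[-k], used for the back-projection.
    psf_flipped = [psf[(-k) % n] for k in range(n)]
    # Start from a flat, strictly positive estimate with the same total flux.
    estimate = [sum(observed) / n] * n
    for _ in range(iterations):
        reblurred = circular_convolve(estimate, psf)
        ratio = [o / (r + eps) for o, r in zip(observed, reblurred)]
        correction = circular_convolve(ratio, psf_flipped)
        estimate = [e * c for e, c in zip(estimate, correction)]
    return estimate


# Toy data (assumed values): two point sources blurred by a small symmetric PSF.
n = 32
true_object = [0.0] * n
true_object[10] = true_object[14] = 1.0

psf = [0.0] * n
psf[0] = 0.4
psf[1] = psf[-1] = 0.2
psf[2] = psf[-2] = 0.1

blurred = circular_convolve(true_object, psf)
restored = richardson_lucy(blurred, psf, iterations=200)
```

In this noise-free toy case the restored signal concentrates the light back around the two source positions while keeping the total intensity of the blurred signal. With real, noisy data the number of iterations acts as a regularisation parameter and has to be chosen with care.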

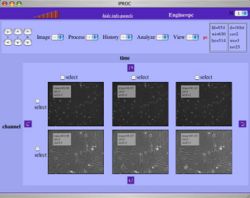

IPROC: a web system to visualize and process biological images

Digital images are in widespread use for basic research in cell and molecular biology as well as for laboratory and clinical diagnosis. Fast optical sectioning techniques, in combination with time-lapse microscopy, create large multidimensional data sets that are used to image the dynamics of biological events. Managing large numbers of images requires databases (DB), while processing of the acquired images is often necessary to enhance the visibility of cell features that would otherwise be hidden. Several image processing options, often designed to address specific biological requirements, are available as Unix programs and libraries. Integrating them into a web-based image processing engine, accessible online by many concurrent users, promises to be both a powerful and an efficient way to deal with images in a scientific environment. A combination of dynamic web pages, an SQL database, web services and concurrent process scheduling was used to create a system, IPROC, able to store images and have them processed interactively by a relatively large number of simultaneous users. Three independent modules cooperate to generate the interface and to ensure processing, by coordinating web-page construction, image data retrieval, image processing and delivery. Processing is obtained by integrating a large library of different Unix filters installed on the server, while interactivity is provided by the ability to react quickly to user input via small data requests. Filters are applied in sequence, reducing the need for temporary storage and allowing unlimited backstepping. PHP was used as the main language to build a number of objects, which together take care of obtaining images from a DB or from external files and applying the required processing steps. Wrapper objects take care of interfacing with different sets of image processing tools, available as command-line programs or web services.
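One way to see why applying filters in sequence reduces temporary storage and permits unlimited backstepping is the sketch below. It keeps only the source image and the ordered list of filter steps, and recomputes the result on demand, so undoing a step just drops the last entry. IPROC itself is written in PHP and its actual classes are not shown in this text; the Python class `FilterChain`, its methods and the two toy filters are hypothetical names introduced only for illustration.

```python
from typing import Callable, List

Image = List[List[int]]            # greyscale image as rows of 0-255 pixel values
Filter = Callable[[Image], Image]  # a filter maps one image to a new image


def invert(image: Image) -> Image:
    """Point filter: invert every pixel value."""
    return [[255 - p for p in row] for row in image]


def threshold(level: int) -> Filter:
    """Build a point filter that binarises the image at `level`."""
    def apply(image: Image) -> Image:
        return [[255 if p >= level else 0 for p in row] for row in image]
    return apply


class FilterChain:
    """Hypothetical chain: stores the source and the steps, not the results."""

    def __init__(self, source: Image) -> None:
        self.source = source
        self.steps: List[Filter] = []

    def push(self, step: Filter) -> "FilterChain":
        """Append one processing step to the end of the chain."""
        self.steps.append(step)
        return self

    def backstep(self) -> "FilterChain":
        """Undo the most recent step; a no-op on an empty chain."""
        if self.steps:
            self.steps.pop()
        return self

    def render(self) -> Image:
        """Apply all steps in order, starting from the untouched source."""
        image = self.source
        for step in self.steps:
            image = step(image)
        return image


chain = FilterChain([[0, 100], [200, 255]])
chain.push(invert).push(threshold(128))
processed = chain.render()
previous = chain.backstep().render()  # result with the threshold step undone
```

Because the source image is never modified, any number of backsteps can be taken without storing intermediate images. The trade-off is that each render recomputes the whole chain.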
Adapter modules allow different image processing steps to be executed in sequence. IPROC looks and behaves like a locally running application, while retaining the ability to take advantage of the storage and computational resources available on the servers. Multidimensional images are treated as a collection of independent frames that may be processed simultaneously. Visualization may highlight various aspects of the image data by using a variety of display modes. The modular structure of the application permits the various parts of the same job to be distributed across different machines, thus ensuring speed and low latency for the operations involved in page redrawing. In a parallel environment, a large number of frames, requested by one or more users, may be calculated at the same time on different cluster nodes. Currently several processing filters have been included by taking advantage of adapters developed for the ImageMagick and PDL libraries as well as for PHP internal image functions. Most point or area processing filters are available, as well as tools for modifying image geometry and a number of specific processing steps acting on the image as a whole, such as reslicing, projection or deconvolution. The integration of image analysis tools makes it easy to produce and visualize histograms or other statistical measurements in text or graphic formats. A specific set of tools, independently developed for studying cell movement, is also being adapted to work within this environment.
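The treatment of a multidimensional image as independent frames can be sketched as follows. This is only an analogy under stated assumptions: a local thread pool stands in for the cluster nodes mentioned above, and `process_frames`, `invert_frame` and the worker count are hypothetical names and values, not part of IPROC.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

Frame = List[List[int]]  # one 2D frame as rows of 0-255 pixel values


def invert_frame(frame: Frame) -> Frame:
    """Example per-frame filter: invert every pixel value."""
    return [[255 - p for p in row] for row in frame]


def process_frames(
    frames: List[Frame],
    frame_filter: Callable[[Frame], Frame],
    max_workers: int = 4,
) -> List[Frame]:
    """Apply `frame_filter` to every frame concurrently.

    Frames do not depend on one another, so they can be handed to
    separate workers. `map` returns results in the input order.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(frame_filter, frames))


# A tiny three-frame stack with assumed pixel values.
stack = [
    [[0, 10], [20, 30]],
    [[40, 50], [60, 70]],
    [[80, 90], [100, 110]],
]
inverted_stack = process_frames(stack, invert_frame)
```

Since each frame is processed without reference to its neighbours, the same pattern carries over to separate processes or machines. Steps that act on the image as a whole, such as reslicing or projection, need the full stack and do not decompose this way.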